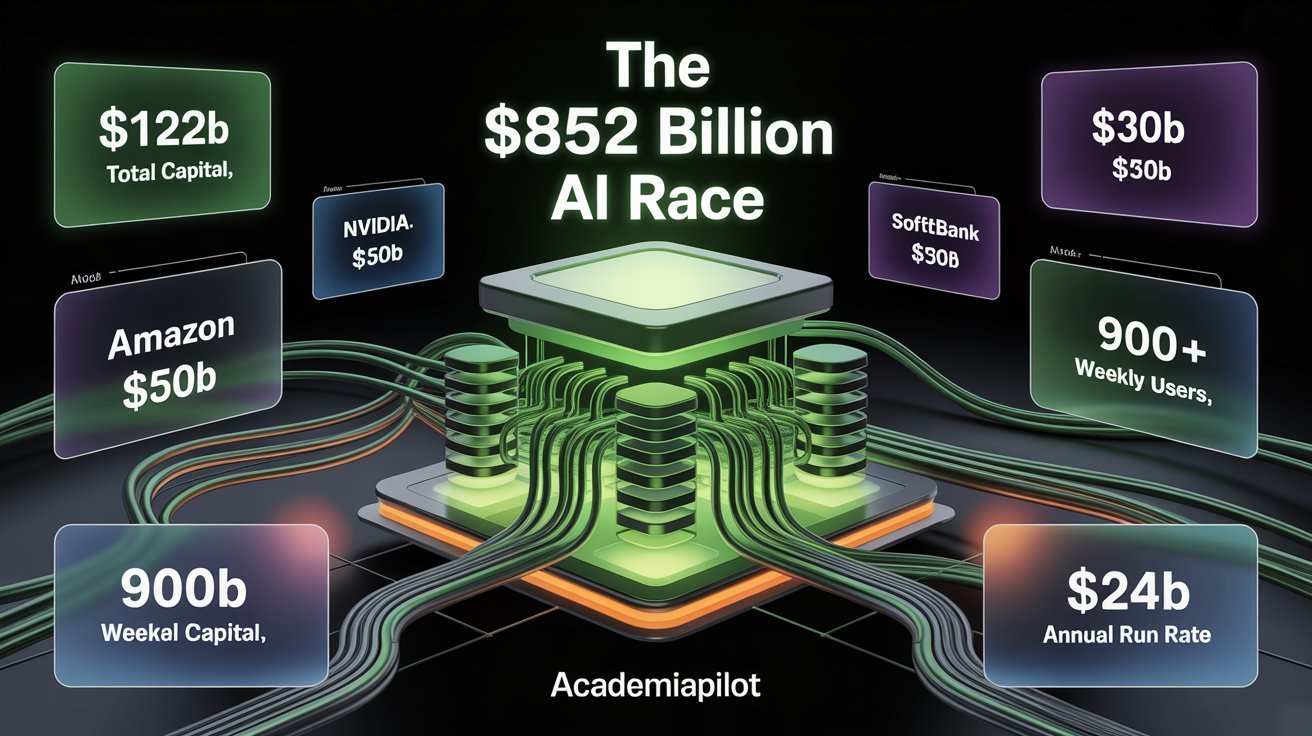

OpenAI Raises $122 Billion at $852B Valuation: What It Actually Means for Developers, Prices & the AI Race

The inside breakdown of OpenAI's record-breaking funding round. Why Amazon invested $50B, what it means for API pricing trajectories, the IPO reality check, and how developers should restructure their stacks to survive the AI Superapp era.

What Just Happened: Breaking Down the Record

Start with the context. $122 billion in a single private raise is not an incremental record: it is roughly three times the previous largest AI funding round, OpenAI's own $40 billion close in early 2025. Before that, SoftBank's Vision Fund era produced the largest checks the private markets had seen: Uber's $8.1B raise, WeWork's ill-fated billions, ByteDance at roughly $3B. None of them come close.

The $852 billion valuation places OpenAI above all but a handful of public companies globally — roughly the market cap of Berkshire Hathaway, and larger than Visa, JPMorgan Chase, or Samsung. This is a company that has never turned a profit, burning through capital at a rate that requires perpetual fundraising, valued on the assumption that everything goes right.

Here are the key numbers and how the largest commitments are structured:

- Amazon ($50B): $35B of it is contingent on an IPO or on OpenAI achieving artificial general intelligence (AGI).

- Nvidia ($30B): committed entirely as dedicated GPU compute capacity, not cash.

- SoftBank ($30B): paid in three installments, in April, July, and October 2026.

SoftBank co-led the round alongside Andreessen Horowitz, D.E. Shaw Ventures, MGX, TPG, and T. Rowe Price Associates, with participation from Amazon, Nvidia, and Microsoft. About $3 billion came from individual investors via bank channels.

There was also significant participation from a diverse set of global institutions including Altimeter, Appaloosa LP, ARK Invest, affiliated funds of BlackRock, Blackstone, Coatue, D1 Capital Partners, Dragoneer, Fidelity Management & Research Company, Insight Partners, Sequoia Capital, Temasek, and Thrive Capital, among others.

The breadth of that list is as significant as the dollar amount. This is not a concentrated bet from a few believers — it is the entire institutional capital stack signaling alignment behind OpenAI as critical infrastructure.

The Hidden Mechanics: What $122 Billion Actually Means in Real Cash

Most coverage stops at the headline number and misses the nuance. Anyone building on OpenAI's platform needs to understand how this round is actually structured.

Nvidia's $30 billion contribution is not cash at all. It is dedicated GPU compute capacity — hardware infrastructure commitments that OpenAI can use to train and run its models. You cannot pay salaries or cover operating expenses with GPUs. SoftBank's $30 billion is structured in installments — three tranches of $10 billion each, arriving in April, July, and October 2026. The phased structure is deliberate. It gives SoftBank the ability to evaluate OpenAI's progress before deploying the full amount.

A large portion of Amazon's investment — $35 billion — is contingent on OpenAI going public or achieving artificial general intelligence.

Add it up, and the immediate cash from the two largest cash investors is approximately $25 billion: $15 billion from Amazon and the first $10 billion tranche from SoftBank. This figure counts only Amazon and SoftBank; the remaining roughly $12 billion of the round comes from the other participants. That $25 billion in real cash lands against a brutal cost structure. OpenAI is projected to lose $14 billion in 2026 alone. Losses are expected to escalate to $35 billion in 2027 and $45 billion in 2028 as infrastructure spending compounds.
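To make the arithmetic explicit, here is a minimal Python sketch that tallies the round using only figures quoted in this article. It assumes Amazon's total commitment is $50 billion ($15 billion upfront plus the $35 billion contingency); the dictionary keys and function names are illustrative, not taken from any reporting.

```python
# Back-of-envelope tally of the $122B round, using only figures quoted in this article.
# All amounts are in billions of US dollars.

TOTAL_ROUND = 122
NVIDIA_COMPUTE = 30                # GPU capacity, not cash
SOFTBANK_TRANCHES = [10, 10, 10]   # April, July, October 2026
AMAZON_TOTAL = 50                  # assumption: $15B upfront + $35B contingent
AMAZON_CONTINGENT = 35             # released only on an IPO or AGI

# Projected annual losses quoted in this article, in billions.
PROJECTED_LOSSES = {2026: 14, 2027: 35, 2028: 45}


def round_breakdown() -> dict[str, int]:
    """Split the headline number into cash-now, deferred, contingent, and non-cash parts."""
    amazon_upfront = AMAZON_TOTAL - AMAZON_CONTINGENT
    named_total = NVIDIA_COMPUTE + sum(SOFTBANK_TRANCHES) + AMAZON_TOTAL
    return {
        "immediate_cash_amazon_softbank": amazon_upfront + SOFTBANK_TRANCHES[0],
        "deferred_softbank_tranches": sum(SOFTBANK_TRANCHES[1:]),
        "contingent_amazon": AMAZON_CONTINGENT,
        "non_cash_nvidia_compute": NVIDIA_COMPUTE,
        "other_investors": TOTAL_ROUND - named_total,
    }


def cumulative_losses(through_year: int) -> int:
    """Sum of projected losses from 2026 up to and including `through_year`."""
    return sum(loss for year, loss in PROJECTED_LOSSES.items() if year <= through_year)


if __name__ == "__main__":
    for label, amount in round_breakdown().items():
        print(f"{label:<32} ${amount}B")
    print(f"{'projected_losses_2026_2028':<32} ${cumulative_losses(2028)}B")
```

The tally accounts for the full $122 billion: $25 billion of immediate Amazon and SoftBank cash, $20 billion in later SoftBank tranches, $35 billion contingent from Amazon, $30 billion of Nvidia compute, and about $12 billion from the remaining participants. Against that, the projected 2026 to 2028 losses sum to $94 billion.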

This is not a critique — it is a structural reality of building frontier AI. The capital burn is intentional. The bet is that compute and talent investment today produces a defensible infrastructure moat that generates asymmetric returns post-IPO. But developers and founders building on top of this infrastructure need to hold this picture clearly in mind: OpenAI is not in a comfortable financial position. It is in a calculated, time-pressured race against its own burn rate and its competitors' capabilities.

The Amazon Contingency Clause: What It Signals

The $35 billion contingency tied to an IPO or AGI deserves its own moment. Amazon's investment is effectively structured as a conditional bet: if OpenAI neither goes public nor demonstrates AGI-level capability, that $35 billion never has to be deployed. This protects Amazon's downside while giving OpenAI the headline number. It also tells you how Amazon views the risk profile of this investment: not as a safe infrastructure bet, but as a high-conviction, high-risk strategic position.

For developers, the Amazon angle matters because it reinforces OpenAI's AWS integration trajectory. Expect tighter integration between OpenAI's APIs and AWS services such as SageMaker, as part of the strategic partnership terms that likely accompany this investment.

The Superapp Vision: OpenAI's Play for Your Entire Workflow

OpenAI stated directly: "That is why we are building a unified AI superapp. As models become more capable, the limiting factor shifts from intelligence to usability. Users do not want disconnected tools. They want a single system that can understand intent, take action, and operate across applications, data, and workflows. Our superapp will bring together ChatGPT, Codex, browsing, and our broader agentic capabilities into one agent-first experience."

The framing is explicit: OpenAI wants to own the primary interface for how people use AI.

This is not incremental product evolution. It is a direct declaration of platform ambition that every developer building on OpenAI's infrastructure needs to internalize. The superapp model collapses the distinction between:

- Consumer interface (ChatGPT as daily driver)

- Developer tooling (Codex as coding agent)

- Enterprise workflow (Operator-level agentic automation)

- Browsing and research (Atlas browser integration)

- Creative production (Sora, DALL·E, and audio generation)

The analogies are not flattering to independent builders. WeChat's superapp consolidation in China effectively killed dozens of standalone messaging, payments, and ecommerce apps. Facebook's platform consolidation in 2012–2016 created, then crushed, an entire ecosystem of social apps that built on its social graph. OpenAI knows this pattern intimately — and they are explicitly choosing it.

By unifying surfaces, OpenAI can translate advances in model capability directly into user adoption and engagement. The company's consumer scale becomes the front door for enterprise usage, as familiarity in daily life drives adoption at work.

The GPT-5.4 Engine: What's Actually Driving the Numbers

The funding story cannot be separated from the product story. GPT-5.4 is driving record engagement across agentic workflows, and OpenAI's APIs now process more than 15 billion tokens per minute.

GPT-5.4 is OpenAI's first mainline reasoning model that incorporates the frontier coding capabilities of GPT-5.3-Codex. It supports up to 1 million tokens of context, allowing agents to plan, execute, and verify tasks across long horizons. GPT-5.4 is also the first general-purpose model with native, state-of-the-art computer-use capabilities, enabling agents to operate computers and carry out complex workflows across applications.

In practical terms for developers, this means:

Developers can generate production-quality code, build polished front-end UI, follow repo-specific patterns, and handle multi-file changes with fewer retries.

GPT-5.4 is priced at approximately $10 per million input tokens and $30 per million output tokens, significantly cheaper than Claude Opus 4.6 while delivering comparable benchmark performance. At $30 per million output tokens versus $75 for Opus 4.6, the cost difference is substantial at scale.
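The gap is easier to see on a concrete workload. This sketch uses the output prices quoted above ($30 versus $75 per million output tokens) and a hypothetical volume of 500 million output tokens per month. Input pricing for Opus is not quoted in this article, so the comparison covers output tokens only.

```python
# Output-token cost comparison at the per-million-token prices quoted above.
# Only output prices are compared; the workload size is hypothetical.

OUTPUT_PRICE_PER_MILLION = {
    "gpt-5.4": 30.0,
    "claude-opus-4.6": 75.0,
}


def output_cost(output_tokens: int, price_per_million: float) -> float:
    """Dollar cost of `output_tokens` at a price quoted per 1M tokens."""
    return output_tokens / 1_000_000 * price_per_million


def monthly_comparison(output_tokens: int) -> dict[str, float]:
    """Output cost per model for the same monthly token volume."""
    return {model: output_cost(output_tokens, price)
            for model, price in OUTPUT_PRICE_PER_MILLION.items()}


if __name__ == "__main__":
    costs = monthly_comparison(500_000_000)  # hypothetical: 500M output tokens/month
    for model, cost in costs.items():
        print(f"{model:<16} ${cost:,.0f}/month")
    print(f"{'difference':<16} ${costs['claude-opus-4.6'] - costs['gpt-5.4']:,.0f}/month")
```

At that hypothetical volume the output bill is $15,000 versus $37,500 a month, a $22,500 difference that grows linearly with volume.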

Codex now serves over 2 million weekly users, up 5x in the past three months, with usage growing more than 70% month over month.

These numbers matter because they validate the business case that underlies the $852B valuation. This is not speculation about future capability — it is a demonstrated flywheel already in motion.

The Business Composition Shift: Enterprise Is Now the Real Story

The AI giant's enterprise business now makes up 40% of revenue (up from around 30% last year) and is "on track to reach parity with consumer by the end of 2026."

This is the signal that most commentary has missed. The popular narrative frames OpenAI as a consumer chatbot company. The financial reality is increasingly that of a B2B infrastructure provider — and that changes everything about the competitive dynamics, pricing strategy, and platform risk calculation.

When enterprise reaches revenue parity with consumer, OpenAI's negotiating position with large companies becomes structurally different. Enterprise contracts are longer, stickier, and require more customization — which means more lock-in, more integration depth, and significantly higher switching costs than consumer subscriptions.

What This Means for Developers: A Detailed Breakdown

API Pricing: The Window Is Open, But Read the Incentive Structure

Right now, developers building on OpenAI APIs are operating in historically favorable pricing conditions. A single agentic task in GPT-5.4 might involve 3–5 API calls, and complex agentic tasks that include debugging run $0.48–$0.72 per task sequence. The trend suggests continued price decreases over the next 6–12 months.
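Per-task cost is just each call's token counts multiplied by the quoted prices, summed over the calls in the task. The sketch below assumes the $10/$30 per-million GPT-5.4 pricing quoted earlier; the token counts per call are hypothetical (not measurements), chosen so the results land on the ends of the quoted range.

```python
from dataclasses import dataclass

# GPT-5.4 prices quoted in this article, in dollars per 1M tokens.
INPUT_PRICE_PER_MILLION = 10.0
OUTPUT_PRICE_PER_MILLION = 30.0


@dataclass(frozen=True)
class ApiCall:
    """Token usage for one API call within an agentic task."""
    input_tokens: int
    output_tokens: int

    def cost(self) -> float:
        return (self.input_tokens * INPUT_PRICE_PER_MILLION
                + self.output_tokens * OUTPUT_PRICE_PER_MILLION) / 1_000_000


def task_cost(calls: list[ApiCall]) -> float:
    """Total dollar cost of a multi-call task sequence."""
    return sum(call.cost() for call in calls)


# Hypothetical token counts (not measurements) that land on the quoted range.
low_end = [ApiCall(input_tokens=10_000, output_tokens=2_000)] * 3   # 3 calls at $0.16
high_end = [ApiCall(input_tokens=12_000, output_tokens=2_000)] * 4  # 4 calls at $0.18

if __name__ == "__main__":
    print(f"low end:  ${task_cost(low_end):.2f}")
    print(f"high end: ${task_cost(high_end):.2f}")
```

Substituting your own measured token counts per call gives a per-task figure you can track as prices change.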

That direction is likely to reverse on a predictable schedule. Here is the pricing trajectory developers should model for:

API Pricing Risk Timeline

- Pre-IPO (favorable; now through Q4 2026): Pricing holds steady or dips modestly. OpenAI's incentive is volume: it needs MAU growth, developer adoption, and ARR momentum to tell a clean IPO story. Aggressive API pricing is a user acquisition cost, not a revenue line.

- IPO + 6 months (margin pressure; mid 2027): Public market shareholders immediately demand margin expansion. The period of "API pricing as a growth subsidy" ends. Expect structural price increases on flagship models (GPT-5.4 and successors).

- 24 months post-IPO (value extraction; late 2028): If the AWS playbook holds, enterprise lock-in reaches the point where pricing can increase without significant churn. At that point, the question is no longer "will prices go up?" but "how much?"
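One way to act on this timeline is to stress-test unit economics against price increases before they happen. The sketch below takes the midpoint of the $0.48 to $0.72 per-task range quoted earlier as a baseline and applies a price multiplier per phase. The multipliers and the $1.00 revenue per task are hypothetical assumptions for illustration, not forecasts.

```python
# Stress-test per-task gross margin against hypothetical API price multipliers.
# Baseline: midpoint of the quoted $0.48-$0.72 per agentic task.
BASELINE_TASK_COST = (0.48 + 0.72) / 2

# Hypothetical multipliers for each phase of the timeline (assumptions, not forecasts).
PHASE_MULTIPLIERS = {
    "pre-IPO (through Q4 2026)": 1.0,
    "IPO + 6 months (mid 2027)": 1.25,
    "24 months post-IPO (late 2028)": 1.6,
}


def unit_margin(revenue_per_task: float, multiplier: float,
                baseline: float = BASELINE_TASK_COST) -> float:
    """Gross margin per task after API costs, as a fraction of revenue."""
    if revenue_per_task <= 0:
        raise ValueError("revenue_per_task must be positive")
    return (revenue_per_task - baseline * multiplier) / revenue_per_task


if __name__ == "__main__":
    revenue = 1.00  # hypothetical price you charge per task
    for phase, multiplier in PHASE_MULTIPLIERS.items():
        print(f"{phase:<32} margin {unit_margin(revenue, multiplier):.0%}")
```

Under these assumed multipliers, a product earning a 40% margin per task today drops to 25% in mid 2027 and about 4% by late 2028, which is the squeeze the timeline warns about.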

The developer action item: Architect for model abstraction today. Your application's dependency on specific OpenAI model behaviors should be isolated behind an interface layer that can swap providers. This is not theoretical — it is the same infrastructure decision that cost companies millions when AWS changed pricing or deprecated services.
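A minimal sketch of that interface layer in Python: application code depends only on a small `CompletionProvider` protocol, and a router tries providers in priority order, falling back when one fails. `CompletionProvider`, `ModelRouter`, `ProviderError`, and the two stub adapters are hypothetical names for illustration; a real adapter would wrap a vendor SDK, and those SDK calls are deliberately left out.

```python
from typing import Protocol


class ProviderError(Exception):
    """Raised by an adapter when a call fails (rate limit, outage, deprecation)."""


class CompletionProvider(Protocol):
    """The only surface application code is allowed to depend on."""
    name: str

    def complete(self, prompt: str) -> str: ...


class ModelRouter:
    """Tries providers in priority order and falls back on ProviderError."""

    def __init__(self, providers: list[CompletionProvider]) -> None:
        if not providers:
            raise ValueError("at least one provider is required")
        self.providers = providers

    def complete(self, prompt: str) -> tuple[str, str]:
        """Return (provider name, completion) from the first provider that succeeds."""
        errors: list[str] = []
        for provider in self.providers:
            try:
                return provider.name, provider.complete(prompt)
            except ProviderError as exc:
                errors.append(f"{provider.name}: {exc}")
        raise ProviderError("all providers failed: " + "; ".join(errors))


# Hypothetical stand-in adapters; in practice each would wrap one vendor's SDK.
class FlakyPrimary:
    name = "primary"

    def complete(self, prompt: str) -> str:
        raise ProviderError("rate limited")


class EchoFallback:
    name = "fallback"

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


if __name__ == "__main__":
    router = ModelRouter([FlakyPrimary(), EchoFallback()])
    print(router.complete("summarize this ticket"))
```

The design choice that matters is that nothing outside the adapters imports a vendor SDK, so swapping or reordering providers becomes a configuration change rather than a rewrite.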

The Platform Risk Calculation Has Changed

ChatGPT has six times the monthly web visits and mobile sessions of the next largest AI app, while total time spent in ChatGPT is 4x that of the next largest AI app and 4x that of all others combined.

At this scale, OpenAI is not just an API provider. It is a platform, with all the implications that carries, and it is positioning itself for the value-extraction phase. The $122B raise accelerates this transition. With $25B+ in liquid capital and Nvidia GPUs dedicated to its compute stack, OpenAI can build features fast, including features that may directly compete with the apps developers are building on its platform today.

Compute Availability and the Rate Limit Equation

Here is the practical near-term upside for developers that gets underreported: the compute bottleneck is finally breaking open.

OpenAI and Amazon announced a multi-year strategic partnership to accelerate AI innovation. The announcement also expands collaboration with NVIDIA, including 3 GW of dedicated inference capacity and 2 GW of training capacity on Vera Rubin systems, building on the Hopper and Blackwell systems already in operation.

Translated out of infrastructure language, 5 GW of combined capacity should mean:

- Rate limits on API usage tiers 1–3 will expand meaningfully through Q3 2026

- Real-time multimodal endpoints (voice, vision, computer use) will scale to production reliability

- Fine-tuning queue times will move toward near-real-time provisioning by Q4 2026

How the Competitive Landscape Shifts

Google's Uncomfortable Position

OpenAI's search usage has nearly tripled in a year, and its ads pilot reached more than $100 million in ARR in under six weeks. That last data point is the most alarming for Google. If even 15% of Google's current search queries migrate to AI-native interfaces over the next three years, the advertising revenue impact is measured in tens of billions annually.
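The arithmetic behind that claim is simple if you assume ad revenue scales linearly with query volume. The sketch uses a placeholder of $200 billion a year in search ad revenue, a round assumption in the rough range of what Google reports for search, not a figure from this article.

```python
# Back-of-envelope: annual search ad revenue at risk if a share of queries migrates.
# Assumes revenue scales linearly with query volume (a simplification).

ASSUMED_SEARCH_AD_REVENUE_B = 200.0  # placeholder in billions, not a reported figure


def revenue_at_risk(annual_revenue_b: float, migrated_share: float) -> float:
    """Annual revenue (billions) tied to the migrated share of queries."""
    if not 0.0 <= migrated_share <= 1.0:
        raise ValueError("migrated_share must be between 0 and 1")
    return annual_revenue_b * migrated_share


if __name__ == "__main__":
    for share in (0.05, 0.10, 0.15):
        at_risk = revenue_at_risk(ASSUMED_SEARCH_AD_REVENUE_B, share)
        print(f"{share:.0%} of queries -> about ${at_risk:.0f}B per year")
```

At a 15% migration, that works out to about $30 billion a year under these assumptions, consistent with "tens of billions annually."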

Anthropic: The Differentiation Dividend

Paradoxically, OpenAI's scale is becoming a strategic gift to Anthropic. As OpenAI consolidates into a consumer superapp and an enterprise platform simultaneously, the space for a "not-OpenAI" alternative in enterprise expands. Large enterprises have deep concerns about vendor concentration risk. Anthropic's positioning as safety-first, enterprise-grade, and deliberately not pursuing a consumer superapp strategy becomes more defensible.

The Open-Source Pressure Valve

One underreported consequence of this raise: it will dramatically accelerate open-source AI investment globally. Enterprise buyers hedge against monopolistic scale. Expect sovereign AI investment from the EU and China to roughly double over the next 24 months as a direct response.

The IPO Reality Check: What Actually Has to Happen

OpenAI's late-2026 IPO target is real, but the path is narrower than the headline suggests. The $24B annualized revenue run rate reflects remarkable growth, but the path to profitability runs entirely through the compute cost curve.

Among the critical IPO prerequisites, non-profit restructuring and regulatory clearance represent the highest structural hurdles to a 2026 listing.

The biggest hidden risk is governance restructuring. The non-profit's $180B stake is a structural complication for the IPO: any restructuring that dilutes or redirects that stake could face legal challenge on the grounds that it departs from the original charitable mission.

The Bottom Line: What Every Developer and Builder Should Do Right Now

OpenAI's $122 billion raise is not a story about venture capital exuberance or Silicon Valley hype cycles. It is a story about infrastructure capture at civilizational scale, executed with unprecedented financial backing and a clear-eyed understanding of the platform lifecycle.

The expected actions for builders:

- Ship aggressively now. The combination of powerful APIs, competitive pricing, and 900M potential users is a window that will not repeat. Use it.

- Build with abstraction. Never let any single provider own more than 70% of your critical AI functionality without a tested fallback.

- Go vertical, not horizontal. The superapp will eat horizontal productivity AI. Domain-specific, compliance-heavy, or operationally complex verticals are safer and more defensible.

- Model for post-IPO pricing. Your 2027–2028 unit economics should assume API costs materially higher than today's.
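The 70% rule is easy to monitor if you log which provider served each critical AI call. This sketch assumes such a log exists as a list of provider names; the provider names and the `covered_by_fallback` set are hypothetical. It flags any provider above the threshold that lacks a tested fallback.

```python
from collections import Counter

MAX_PROVIDER_SHARE = 0.70  # threshold from the guideline above


def provider_shares(call_log: list[str]) -> dict[str, float]:
    """Fraction of critical AI calls served by each provider."""
    counts = Counter(call_log)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {provider: n / total for provider, n in counts.items()}


def over_concentrated(call_log: list[str], covered_by_fallback: set[str],
                      max_share: float = MAX_PROVIDER_SHARE) -> list[str]:
    """Providers above `max_share` of critical calls with no tested fallback."""
    return sorted(
        provider
        for provider, share in provider_shares(call_log).items()
        if share > max_share and provider not in covered_by_fallback
    )


if __name__ == "__main__":
    log = ["openai"] * 8 + ["anthropic"] * 2  # hypothetical week of critical calls
    print(provider_shares(log))
    print(over_concentrated(log, covered_by_fallback=set()))
```

Running the check in CI or a weekly report turns the guideline into something that fails loudly instead of drifting.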

The capital is real. The vision is coherent. The risk is concentrated. Build accordingly.
